Sparse Estimation with the Swept Approximated Message-Passing Algorithm

A. Manoel, E. W. Tramel, F. Krzakala, & L. Zdeborová

Abstract

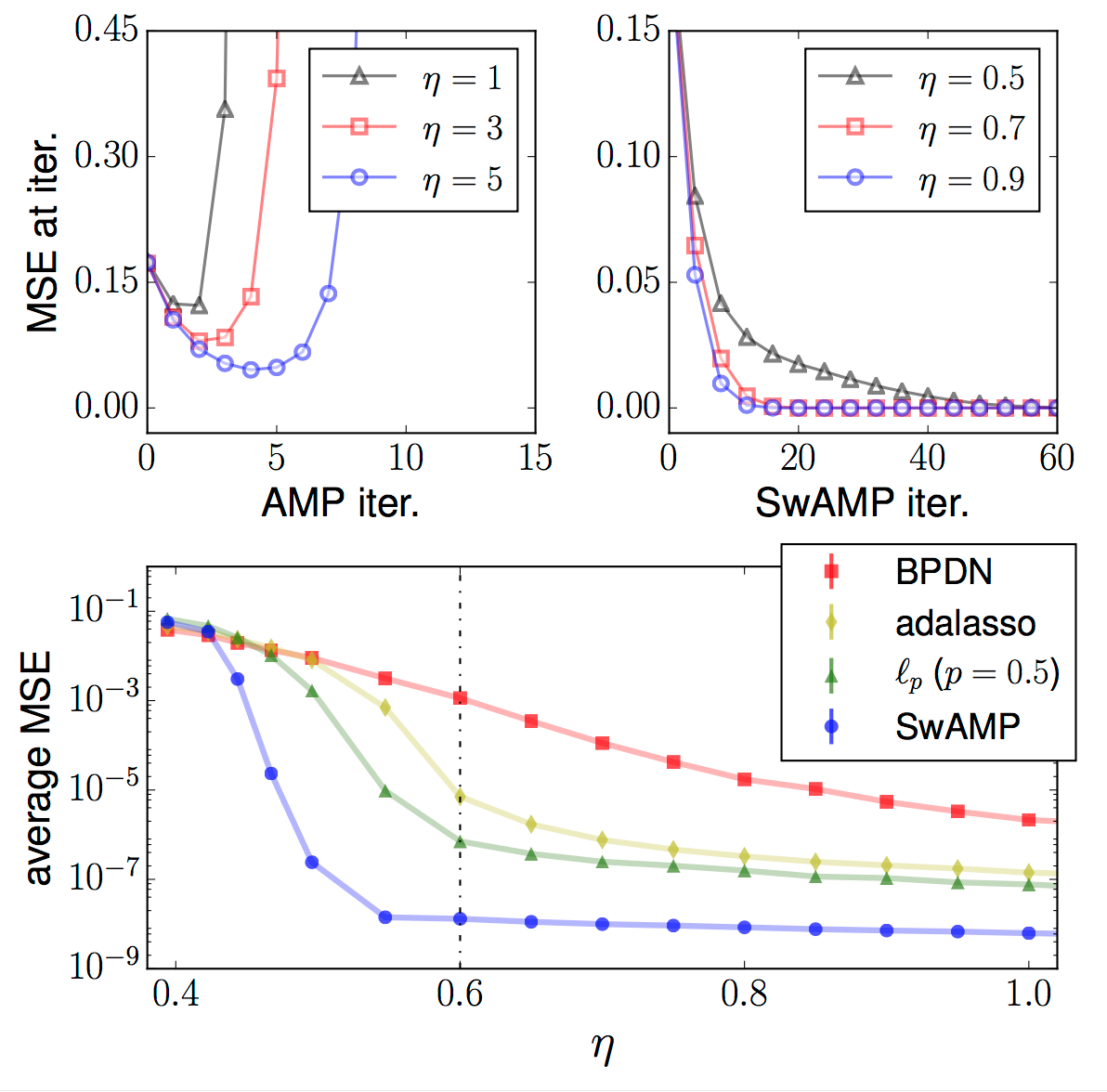

Approximate Message Passing (AMP) has been shown to be a superior method for inference problems, such as the recovery of signals from sets of noisy, lower-dimensionality measurements, both in terms of reconstruction accuracy and in computational efficiency. However, AMP suffers from serious convergence issues in contexts that do not exactly match its assumptions. We propose a new approach to stabilizing AMP in these contexts by applying AMP updates to individual coefficients, one at a time, rather than to all coefficients in parallel. Our results show that this change to the AMP iteration can provide expected, but hitherto unobtainable, performance for problems on which the standard AMP iteration diverges. Additionally, we find that the computational cost of this *swept* coefficient update scheme is not unduly burdensome, allowing it to be applied efficiently to signals of large dimensionality.

BibTeX

@conference{mtk2015,

Address = {Lille, France},

Author = {Andre Manoel and Eric W. Tramel and Florent Krzakala and Lenka Zdeborov\'{a}},

Booktitle = {Proc. Int. Conf. on Machine Learning (ICML)},

Month = {July},

Title = {Sparse Estimation with the Swept Approximated Message-Passing Algorithm},

Year = {2015}}
